Enterprise AI Is Fragmenting. Every Category Is Hitting the Same Wall.

Enterprise AI is fragmenting fast. Search AI, productivity AI, HR analytics, agent platforms, foundation models: every category is racing to own a piece of the enterprise stack. Each one is solving a real problem, adding real value, and eventually hitting the same wall.

The pattern is consistent enough to state plainly: the missing layer is not a feature any existing platform will ship. It is a category of its own.

Four enterprise AI categories, one blind spot

The Landscape: Four Categories, Four Strengths, One Blind Spot

1. Enterprise Search & Knowledge Management

Glean, Box AI, Notion AI

Core strength: Indexed knowledge at scale. You ask a question, it finds the right document, surfaces the right policy, retrieves the relevant meeting note. For enterprises with sprawling information environments, that is genuinely valuable. Glean now ships 85+ agent actions and performs better on complex enterprise queries than generic AI models. These are serious capabilities.

Where they still stop: Enterprise search knows what is in the organization. It does not know how the organization actually works. Retrieving the right document is not the same as knowing whether it reflects current practice, informal authority, or the exceptions people follow in real work.

In many companies, internal documents are written by knowledge writers or documentation teams, not by the people doing the work firsthand. That makes the content useful, but not always complete enough for task automation. It may capture the official process, while missing the judgment, shortcuts, and edge cases that business teams rely on.

The same is true for meeting transcripts and recorded notes. Even when everything is captured automatically, important context is still missing. Not everything is said in meetings, and not every gap is written down. The people closest to the work usually know what the documents leave out.

So enterprise search can retrieve the archive. It still cannot fully capture the living knowledge of how the business actually runs.

2. Foundation Model Platforms (LLMs Going Enterprise)

Anthropic for Enterprise, Perplexity for Enterprise

Core strength: Powerful reasoning and generation at scale. These are not just retrieval systems. They can interpret, synthesize, and act across complex inputs, which makes them highly useful for enterprise work.

Where they still stop: Foundation models can reason, but they do not know your organization's authority structure, informal norms, or real escalation paths. They may generate a smart answer or recommend a logical next step, but they still cannot reliably tell who should approve something, who actually influences a decision, or when to defer.

That gap matters even more in enterprise settings. A model may route a security review to the correct owner by title, but not know that the person is overloaded, unavailable, or not the one others actually rely on for urgent decisions. The routing is technically correct. The outcome is still wrong.

The issue is not lack of intelligence. It is lack of organizational context. Without that, powerful models do not just make mistakes. They make confident, well-structured mistakes at scale.

3. Productivity & Communication AI

Superhuman, Slack AI, Microsoft Copilot

Core strength: Workflow acceleration. These tools help people draft, summarize, triage, and move work faster across communication channels.

Where they still stop: Productivity AI faces a core tradeoff: read the content and raise security, privacy, and governance concerns, or rely on metadata alone. But metadata patterns can be misleading. Frequent interaction does not always mean trust. A fast response does not always mean importance. A meeting-heavy relationship does not always mean real decision authority.

That means the tool may see that people interacted, but not understand why, how sensitive the issue was, or what invisible relationship dynamics shaped the exchange. Metadata can show patterns. It cannot fully explain their meaning.

The limitation is not just communication quality. It is that these systems either need highly sensitive access to understand the work, or they operate with partial context that can easily be misread.

4. HR & People Analytics Moving Into Knowledge Management

Workday, Eightfold, Gloat, Visier

Core strength: Structured people data at scale: headcount, skills, performance history, and workforce planning. These platforms are useful for understanding the formal organization and are increasingly pushing toward expertise and talent visibility.

Where they still stop: HR and people analytics know the official organization. They do not fully capture how work and decisions actually route in practice. Skills data may show what someone is qualified to do on paper, but not who others trust when the stakes are high. Org charts show reporting lines, but not whose informal approval actually moves work forward.

That gap becomes visible in everyday operations. A system may see three senior counsel with similar titles and route a contract based on workload or availability. But in practice, one specific counsel may be the person everyone relies on for a certain type of vendor agreement because she built the playbook and her judgment is trusted. The official structure is accurate. The routing is still wrong.

The limitation is not people data itself. It is that formal people data does not fully capture behavioral reality. The org chart shows the structure. It does not show the real path of decision making.

---

The Common Wall: Two Tiers That Every Category Builds On

All four categories share the same blind spot: they are working from only two tiers of organizational data.

Tier 1, Structural: Org charts, reporting lines, formal titles. Static. They reflect intended structure, not actual behavior.

Tier 2, Transactional: Documents, tickets, emails, and meeting notes. Historical. They capture what was recorded, not always what is happening now.

AI systems built on Tier 1 and Tier 2 mostly understand the official version of the organization. They route a decision to the VP of Finance because that is the formal owner on paper, not because that is who actually approves budget in practice. They surface the latest policy document, not the informal escalation path people have actually been using.

The enterprise AI stack requires three layers to function inside real organizations: the model layer (reasoning and language), the content and data layer (documents, knowledge bases, RAG), and the organizational context layer (behavioral mapping of networks, expertise, and workflow). Research on scaling agent systems confirms that architecture and coordination strategy matter as much as model capability. Models provide intelligence. Data provides information. Organizational context ensures correct AI action. Most stacks today are missing that third layer entirely.

Take an engineering team with a published RFC process, formal review steps, logged comments, and tracked approvals. The system can see the documented workflow. What it may miss is that critical concerns are often resolved through trusted informal channels before anything is finalized. The record shows the formal process. It does not fully capture the real decision dynamics around it.

Some vendors are beginning to capture fragments of this missing layer. Glean, Sweep, and SymphonyAI are building context and decision graphs from behavioral signals, and Foundation Capital has called context graphs a trillion-dollar opportunity. But most context graphs still reflect only the slice of behavior visible inside a single platform. What is still missing is a cross-system organizational behavioral layer derived from real work patterns, continuously updated, and governed in a way that makes it usable for enterprise AI. That is not just a feature gap. It is a data gap.

In practical terms, this layer extends enterprise search from document retrieval to human resolution. When files are not enough, it helps AI find the right person, detect likely bottlenecks, and choose safer escalation paths. That is especially important in urgent, ambiguous, or edge-case situations where the answer is only partly documented or where execution depends on human judgment.

The three tiers of organizational data. Most enterprise AI operates on Tier 1 and Tier 2. Tier 3 is where decisions actually live.

---

How Organizational Network Analysis and the Behavioral Layer Help Address the Gap

Tier 3, Behavioral: Communication patterns, collaboration signals, practical escalation paths, and how work is routed in real operating conditions. Dynamic. Reflects how the organization works in practice, not just how it is formally described.

The methodology behind Tier 3 is Organizational Network Analysis (ONA). ONA maps actual patterns of communication and collaboration across an organization: who works with whom, how often, in what context, and through which channels. It looks beyond the org chart to the operating network of the business. ONA has existed for decades, but it was traditionally periodic, retrospective, and expensive. By the time the analysis was delivered, the organization had often already changed.

The architectural shift here is to treat ONA not as a one-time report, but as continuously updated infrastructure built from approved, governed collaboration signals, so that the resulting context can support enterprise AI in a more current and operationally useful way. For a deeper look at how this fits into the modern agent stack (alongside the harness, model, and user-context layers) and why hard-coded permission systems are not a substitute, see "Harnesses Run Agents. Organizational Context Helps Them Act Correctly."

The models behind this layer are built in collaboration with behavioral scientists. Organizational patterns are translated into mathematical models: relevance scores, capacity indicators, routing weights, and escalation paths. These are explainable equations, not opaque neural network outputs. When the system recommends a routing decision, every variable is defined and every weight has a rationale. For organizations that want to understand the specifics, we work directly with governance and security teams to walk through the inputs, the equations, and the outputs.
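To make "explainable equations" concrete, here is a minimal sketch of what an inspectable routing score could look like. It is not BehaviorGraph's actual model: the linear form, the weights, and the field names (`relevance`, `capacity`, `trust`) are all assumptions chosen for illustration. The only point is that every term in the total can be named and examined.

```python
from dataclasses import dataclass

# Hypothetical weights, for illustration only. A real deployment would set
# and document them together with governance and security teams.
WEIGHTS = {"relevance": 0.5, "capacity": 0.2, "trust": 0.3}


@dataclass(frozen=True)
class Candidate:
    code: str         # coded identifier such as "LEG-07", not a name
    relevance: float  # 0..1, fit between the person's expertise and the task
    capacity: float   # 0..1, share of bandwidth currently available
    trust: float      # 0..1, validated reliance from colleagues


def routing_score(candidate: Candidate) -> dict:
    """Return the total score together with each term's contribution."""
    terms = {name: WEIGHTS[name] * getattr(candidate, name) for name in WEIGHTS}
    return {"code": candidate.code, "terms": terms, "total": sum(terms.values())}


if __name__ == "__main__":
    result = routing_score(Candidate("LEG-07", relevance=0.9, capacity=0.4, trust=0.8))
    for name, value in result["terms"].items():
        print(f"{name:>9}: {value:.2f}")
    print(f"    total: {result['total']:.2f}")
```

Because the function returns the per-term breakdown alongside the total, a reviewer can see why one candidate outranked another instead of receiving a bare number.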

A behavioral context layer should not be framed as unrestricted visibility into all communications or as a mechanism for bypassing policy. It should be framed as a governed layer that helps systems make better recommendations within existing permissions, escalation rules, and human oversight. The purpose is not to let AI decide anything it should not decide. The purpose is to help AI know when documents are enough, when a human should be involved, and which approved paths are most relevant.

The Behavioral Knowledge Graph: governed behavioral signals structured as live, queryable context

Two classes of signal: Passive ONA and Active ONA

Not all behavioral signals mean the same thing, and treating them as interchangeable creates risk. Passive ONA reflects structural traces from collaboration systems: meeting co-attendance, response timing, file collaboration, and workflow timestamps. It shows patterns of interaction. Active ONA reflects validated human input: confirmed trust relationships, perceived expertise, or reliance signals gathered through lightweight prompts or surveys. It helps distinguish activity from meaning.

The key point is that high activity does not necessarily mean high trust, high authority, or decision ownership. Two people who communicate frequently may simply be caught in the same workflow, not serving as meaningful decision partners. A system that equates communication volume with influence can produce a misleading picture of the organization, and that error can distort downstream recommendations. So behavioral context should be used to improve routing, prioritization, and escalation support, not as a sole basis for autonomous action.

Trust density: making behavioral context more queryable

What makes behavioral context more useful is not raw activity, but the ability to distinguish stronger, validated working relationships from simple interaction volume. Trust density is one way to measure this: confirmed trust ties divided by total interaction opportunities. High activity with low confirmed trust suggests caution. High trust density suggests a group whose recommendations or coordination patterns may carry more practical weight. In practice, these signals should support human review, prioritization, and escalation decisions, not replace formal approval structures.
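The ratio described above is simple enough to write down directly. The sketch below assumes one reasonable reading of the terms, which the article does not spell out: an "interaction opportunity" is one pair of people who interacted during the observation window, and a "confirmed trust tie" is one such pair validated through Active ONA. The function name and the zero-opportunity convention are likewise illustrative choices.

```python
def trust_density(confirmed_ties: int, interaction_opportunities: int) -> float:
    """Confirmed trust ties divided by total interaction opportunities."""
    if confirmed_ties < 0 or interaction_opportunities < 0:
        raise ValueError("counts must be non-negative")
    if confirmed_ties > interaction_opportunities:
        raise ValueError("confirmed ties cannot exceed interaction opportunities")
    if interaction_opportunities == 0:
        return 0.0  # assumed convention: no observed interaction, no density
    return confirmed_ties / interaction_opportunities


if __name__ == "__main__":
    # A busy group: many interacting pairs, few validated trust ties.
    print(trust_density(confirmed_ties=4, interaction_opportunities=40))  # 0.1
    # A smaller group where most interacting pairs confirm trust.
    print(trust_density(confirmed_ties=7, interaction_opportunities=10))  # 0.7
```

The first group generates four times the activity of the second but scores far lower, which is exactly the distinction between interaction volume and validated relationships.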

Silence is also a signal

Traditional ONA often treats nonresponse as missing data. In practice, it can indicate something important. High collaboration load combined with nonresponse may suggest overload. Low visible activity combined with nonresponse may suggest disconnection, uncertainty, or low engagement. Used carefully, these patterns can help the system avoid over-routing work to overloaded people and help flag cases where additional human attention may be needed. These signals should remain advisory and should always be interpreted within governance and workflow context.
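As a rough sketch of how nonresponse might be read against collaboration load, consider the classifier below. The thresholds (25 and 5 hours per week) and the names are hypothetical, picked only to make the two cases in the paragraph above distinguishable. The output is an advisory label, not a decision.

```python
from enum import Enum


class NonresponseSignal(Enum):
    POSSIBLE_OVERLOAD = "possible overload"
    POSSIBLE_DISCONNECTION = "possible disconnection"
    INCONCLUSIVE = "inconclusive"


def interpret_nonresponse(
    weekly_collaboration_hours: float,
    high_load_hours: float = 25.0,    # hypothetical threshold
    low_activity_hours: float = 5.0,  # hypothetical threshold
) -> NonresponseSignal:
    """Label a nonresponse by the collaboration load observed around it."""
    if weekly_collaboration_hours >= high_load_hours:
        return NonresponseSignal.POSSIBLE_OVERLOAD
    if weekly_collaboration_hours <= low_activity_hours:
        return NonresponseSignal.POSSIBLE_DISCONNECTION
    return NonresponseSignal.INCONCLUSIVE


if __name__ == "__main__":
    for hours in (32.0, 12.0, 2.0):
        print(hours, "->", interpret_nonresponse(hours).value)
```

The explicit `INCONCLUSIVE` case matters: most nonresponse carries no clear meaning, and a system that forces every silence into a category would overstate what the signal can support.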

The full picture: Semantic KM + Behavioral KM

The semantic layer tells AI what something is. It may know that Project Alpha is a strategic initiative, tied to Q4 priorities, and formally owned by the Product organization. What it may not know is who people actually rely on for judgment, who should be consulted when the documentation is incomplete, or where decisions tend to route in practice.

The behavioral layer adds that people-side context: who is relied on, where expertise is recognized, and how work is actually coordinated. Semantic KM is the content layer. Behavioral KM is the operating context layer. Together, they help enterprise AI produce outputs that are not only relevant, but better aligned with how the organization actually functions.

Behavioral Graph RAG: more organizationally grounded answers

Standard RAG operates entirely at the content layer. It retrieves semantically relevant documents but has no awareness of who should receive the answer, who should be consulted next, or when a human needs to be in the loop. BehaviorGraph augments RAG pipelines by injecting behavioral context at retrieval time: routing weights, trust density scores, capacity indicators, and escalation paths drawn from the live organizational graph.

BehaviorGraph is not a RAG system itself. It is a graph layer that integrates with existing AI infrastructure: as a data source injected into RAG queries, as an MCP server that agents can call at runtime, or as a direct API. The integration point depends on the system; the function is the same: supply the organizational context that semantic retrieval cannot provide on its own.
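To show the shape of "context injected into RAG queries," here is a small sketch that appends advisory organizational context to a retrieval-augmented prompt. Everything in it is assumed for illustration: the article does not publish BehaviorGraph's API, so `lookup_context`, `BehavioralContext`, the in-memory graph, and the `LEG-LEAD` identifier are hypothetical stand-ins for whatever the real data source, MCP server, or API returns.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BehavioralContext:
    suggested_reviewers: list[str]  # coded identifiers, not names
    trust_density: float
    escalation_path: list[str]


# Stand-in for a live graph lookup (hypothetical data).
_EXAMPLE_GRAPH = {
    "vendor-agreement": BehavioralContext(
        suggested_reviewers=["LEG-07", "LEG-02"],
        trust_density=0.7,
        escalation_path=["LEG-07", "LEG-LEAD"],
    )
}


def lookup_context(topic: str) -> Optional[BehavioralContext]:
    """Return behavioral context for a topic, or None if nothing is known."""
    return _EXAMPLE_GRAPH.get(topic)


def build_prompt(
    question: str, passages: list[str], context: Optional[BehavioralContext]
) -> str:
    """Combine retrieved passages with advisory organizational context."""
    lines = [f"Question: {question}", "", "Retrieved passages:"]
    lines += [f"- {p}" for p in passages]
    if context is not None:
        lines += [
            "",
            "Organizational context (advisory, within existing permissions):",
            f"- Suggested reviewers: {', '.join(context.suggested_reviewers)}",
            f"- Trust density: {context.trust_density:.2f}",
            f"- Escalation path: {' -> '.join(context.escalation_path)}",
        ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_prompt(
        "Who should review this vendor agreement?",
        ["Contract policy: vendor agreements require legal review."],
        lookup_context("vendor-agreement"),
    ))
```

When the lookup returns nothing, the prompt falls back to plain RAG, so the behavioral layer is additive rather than a dependency of the retrieval path.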

A support rep asking about the product roadmap may not need the entire internal planning history. A better system can surface the materials appropriate to that person's role and context, and when the issue goes beyond what documents can resolve, prompt the right human review path. The answer is not just factually relevant. It is more operationally grounded and governance-aware.

In an ideal model, enterprise search should not just retrieve documents. It should help the right resource reach the right person, and know when a human needs to be brought into the loop. If a document does not resolve the issue, the system should support a safe next step: flag the relevant expert, summarize the issue, or recommend other qualified people within approved policy boundaries. If the primary expert is overloaded, the system should not pretend the answer is unavailable or act beyond its authority. It should recommend alternative reviewers or escalation paths while keeping human judgment in control.
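The fallback behavior described above can be sketched as a small recommendation function. This is an illustration under stated assumptions, not a description of any shipped system: the `Expert` fields, the 0.3 capacity floor, and the `approved` flag (standing in for "within approved policy boundaries") are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Expert:
    code: str         # coded identifier, e.g. "LEG-07"
    relevance: float  # 0..1, fit for this type of issue
    capacity: float   # 0..1, available bandwidth
    approved: bool    # whether policy allows routing this issue to them


@dataclass(frozen=True)
class Recommendation:
    action: str            # "route" or "escalate"
    target: Optional[str]  # coded identifier, or None when escalating
    reason: str


def recommend(experts: list[Expert], min_capacity: float = 0.3) -> Recommendation:
    """Suggest a reviewer, preferring relevance but respecting capacity and policy."""
    ranked = sorted((e for e in experts if e.approved),
                    key=lambda e: e.relevance, reverse=True)
    for expert in ranked:
        if expert.capacity >= min_capacity:
            if expert is ranked[0]:
                reason = "primary expert is available"
            else:
                reason = f"primary expert {ranked[0].code} is overloaded"
            return Recommendation("route", expert.code, reason)
    # Never invent a target: hand the decision back to a person.
    return Recommendation("escalate", None, "no approved reviewer has capacity")


if __name__ == "__main__":
    team = [
        Expert("LEG-07", relevance=0.9, capacity=0.1, approved=True),
        Expert("LEG-02", relevance=0.7, capacity=0.6, approved=True),
        Expert("ENG-01", relevance=0.95, capacity=0.9, approved=False),
    ]
    print(recommend(team))
```

Note that the most relevant person overall (`ENG-01`) is never suggested because policy does not approve the route, and that the function returns a recommendation with a reason rather than taking any action itself.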

The Behavioral Knowledge Graph: ONA as continuous infrastructure for enterprise AI

---

The Research Behind This Approach

This approach is grounded in five years of research by Yumi Kimura on a core enterprise AI problem: why AI systems often fail organizationally, not technically. Conducted through Columbia University's Information and Knowledge Strategy program under the supervision of Katrina Pugh, Ph.D., the work led to the Organizational Intelligence Loop (OIL) (academic paper), a framework for what enterprise AI needs from an organization to work reliably.

OIL maps four context layers: People (expertise, trust, influence), Information (ownership, provenance), Process (how work and decisions actually move), and Agentic AI Design (policy-aware, permission-aligned context). It is grounded in data from about 1,400 organizational deployments and nearly half a billion data points, including calendar events, presence signals, peer links, and surveys across industries. The framework explains why some tools and behaviors get adopted while others stall.

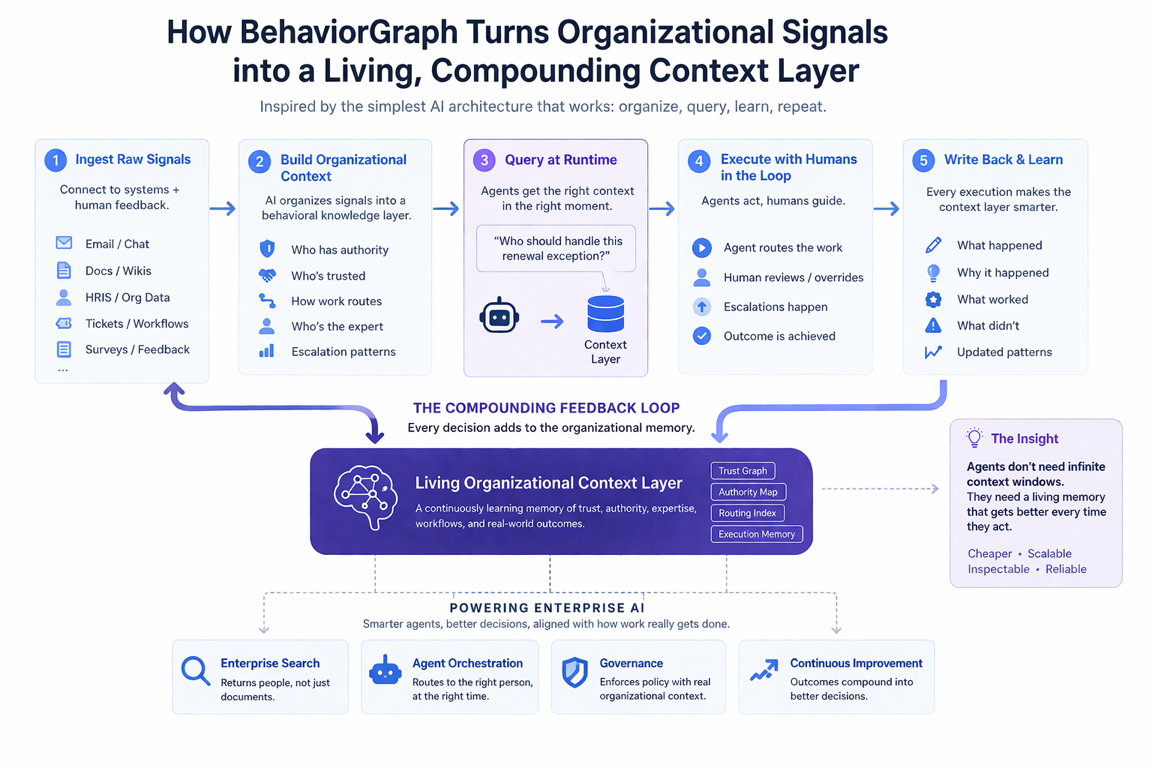

How BehaviorGraph turns organizational signals into a living, compounding context layer

BehaviorGraph is the operationalization of these principles as continuously updated infrastructure, making organizational behavioral context queryable at runtime for enterprise AI systems.

---

A Note on Privacy: Less Invasive Than the Alternative

A fair concern is whether mapping behavioral signals amounts to workplace surveillance. The short answer is no, and the contrast with the status quo makes that clear.

Foundation models and enterprise AI platforms typically require access to emails, meeting transcripts, documents, and message history to function well. They read the content of communications. BehaviorGraph works differently: it operates primarily on metadata, collaboration patterns, and governed organizational signals, rather than on the content of what people say. It does not need to read emails. It observes structure: who communicates with whom, how often, and who tends to be involved in certain types of decisions.

This is an architectural constraint, not a configuration toggle. BehaviorGraph never reads, processes, or stores message content, email bodies, or document text. Operating on metadata by design simplifies security review and accelerates data governance approval.

In practice, BehaviorGraph's API, MCP, and RAG endpoints use coded identifiers (e.g. LEG-07, ENG-01) rather than employee names. Identity resolution is handled by the customer's internal systems with their own access controls. This separation prevents misuse for individual performance evaluation while keeping the behavioral context fully usable for routing and escalation.
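The separation between coded identifiers and identity resolution can be pictured with a short sketch. It assumes a customer-side resolver that the behavioral layer never sees; the class name, the role names, and the placeholder directory entry are hypothetical, and a real deployment would rely on the customer's existing identity and access systems.

```python
class IdentityResolver:
    """Customer-side mapping from coded identifiers to people.

    Lives inside the customer's environment; the behavioral layer only
    ever handles the codes.
    """

    def __init__(self, directory: dict[str, str], allowed_roles: set[str]) -> None:
        self._directory = dict(directory)
        self._allowed_roles = set(allowed_roles)

    def resolve(self, code: str, requester_role: str) -> str:
        """Return the person behind a code, if the requester's role permits it."""
        if requester_role not in self._allowed_roles:
            raise PermissionError(f"role {requester_role!r} may not resolve identities")
        return self._directory[code]


if __name__ == "__main__":
    resolver = IdentityResolver(
        directory={"LEG-07": "Example Person A"},  # placeholder, not real data
        allowed_roles={"workflow-router"},
    )
    print(resolver.resolve("LEG-07", requester_role="workflow-router"))
    try:
        resolver.resolve("LEG-07", requester_role="performance-review-tool")
    except PermissionError as exc:
        print("denied:", exc)
```

Keeping resolution behind the customer's own access check is what lets routing use the context while a performance-evaluation tool, for example, cannot turn a code back into a name.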

That also matters from a governance perspective. Behavioral context should not be used as a shortcut around policy or permissions. In BehaviorGraph's design, the system is paired with agent guardrails and a peer-to-peer agent governance protocol that are dynamic, not one-size-fits-all.

Beyond data minimization, there is a deeper point about fairness. Human judgment in organizations carries significant and often hidden bias. Change management practitioners have long documented three layers of resistance to organizational change: "I don't understand it," "I don't like it," and "I don't like you." That third layer (personal bias, political friction, and in-group or out-group dynamics) shapes a surprising amount of routing and decision making in real organizations, often outside any formal policy. A system that draws on behavioral patterns rather than personal preference can be more consistent and less vulnerable to that layer than human judgment alone.

Behavioral context does not prescribe action. It surfaces signals and reasons; the organization still decides what to do. Trust density is not a performance review. Nonresponse patterns are not HR flags. They are inputs that AI systems can use to route more carefully and escalate more appropriately, and that organizational leaders can use to understand where AI deployments are hitting real structural limits.

---

Why Behavioral Signals Can Generalize Across Organizations

A fair question is why behavioral signals from one organization would help in another. The answer is that while every company is different, many coordination patterns repeat. Informal authorities emerge beyond formal titles. High-trust networks move faster. Low-trust, high-communication environments create friction. Overload often shows up in delayed response and stalled routing. The people and context change, but the underlying patterns often do not.

BehaviorGraph's model is built on data from about 1,400 organizational deployments and close to half a billion data points. That gives it a broader starting point for understanding how work tends to route in enterprise settings. The value is not that it already knows a specific organization. The value is that it helps reduce the cold-start problem. From there, the model becomes more useful as it is configured and refined within the organization's own approved environment and data boundaries.

This matters because most companies cannot build a behavioral context layer on their own. It requires years of data, cross-organizational pattern recognition, and the ability to work with sensitive behavioral signals under strong governance constraints. A cross-organizational base model provides a starting point. Organization-specific signals make it useful in practice.

---

Why the Behavioral Context Layer Must Be Cross-Platform

Most existing systems only see one slice of the organization. Search platforms see documents. HR systems see formal structure. Productivity tools see activity inside their own environment. But enterprise workflows do not stay inside one system. A single task may pull from documents, messaging tools, calendars, workflow systems, and people data at once.

That is why the behavioral context layer cannot live inside one platform alone. To understand how work actually moves, it has to sit across systems, where coordination, collaboration, and routing patterns become visible over time.

---

Why AI Governance Requires This Layer

Enterprise AI is moving quickly from copilots to agents. Gartner predicts 40% of enterprise apps will feature task-specific AI agents by 2026, up from less than 5% in 2025. As agents begin routing decisions, escalating issues, and initiating workflows, governance cannot rely on formal policy alone. The system also needs to understand how work is actually handled in practice: who is typically involved, how decisions usually route, when a case is sensitive or exceptional, and when automation should defer to human review. Tier 1 and Tier 2 do not capture that reliably.

This becomes more urgent as enterprises move from single agents to multi-agent deployments across departments. When multiple agents operate independently, each one makes routing and escalation decisions without a shared understanding of the organization. A shared behavioral context layer gives orchestration platforms the organizational awareness they need: who owns which decisions, where handoffs should go, and when a human must be in the loop.

In engineering, an agent may route a production incident correctly according to the on-call schedule, yet still miss the person everyone relies on in critical cases. In sales, it may escalate a compensation issue to the formal owner, while missing the unwritten review path leadership actually follows. In legal, it may surface information in a way that is technically permitted by static rules but inappropriate in context and better handled through human review. The formal record may be accurate. The operational judgment can still be wrong.

That is the governance gap. Formal policy matters, but without behavioral context, systems still miss the informal reliance patterns, exception paths, and real coordination logic that shape decisions inside organizations. A behavioral layer helps the system recognize when standard automation is not enough and when a qualified person or approved review path should be brought in.

---

Conclusion: The Iron Argument

The real race is not between enterprise search, productivity AI, HR analytics, and foundation models. They are all moving toward the same missing layer, whether they realize it or not. Search wants to answer questions and act on them. Productivity AI wants to take action on behalf of users. Foundation models want to power enterprise workflows. People analytics wants to inform decisions. All of them eventually hit the same wall: the system does not know how the organization actually works.

More documents give you a richer Tier 2. A better org chart gives you a cleaner Tier 1. A more capable model gives you better reasoning over the context it is given. None of that creates Tier 3, because behavioral data at this scope, continuous and cross-system, has still not been structured in a way AI systems can query reliably.

The only path to a truly trustworthy enterprise AI system is a behavioral layer that helps capture how work is actually coordinated, who is typically relied on in practice, how decisions tend to route, and what tacit knowledge shapes outcomes.

That is the Organizational Behavioral Context Layer for Enterprise AI. It is not a feature. It is a category. And it is still largely missing.